Building a Multi-Sensor Long-Range Measurement System: Lessons from Integrating Optoelectronics and Edge AI

Why We Built This

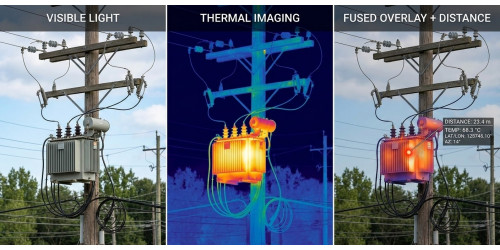

Precision distance measurement and multi-sensor imaging are both well-understood disciplines in isolation. What is less commonly documented is what happens when you try to fuse them into a single coherent system — one where a thermal anomaly, a visual confirmation, and a laser-measured distance all land in the same operator interface, derived from the same moment in time, without manual correlation.

That is the problem our team set out to tackle with this project. The goal was to design and validate a working integration of visible imaging, thermal sensing, and laser ranging on a single edge computing platform — and to understand, through hands-on experience, where the real engineering challenges lie. Not in any individual sensor, but in the seams between them.

This post documents the concept design, the component choices we made and why, the measurement framework we implemented, and some of the practical lessons that emerged along the way.

The Concept: Sensor Fusion as a First-Class Design Goal

The starting premise was straightforward: a single-sensor system is always brittle at the edges of its operating envelope. A visible camera fails in darkness. A thermal camera lacks the spatial resolution for fine structural detail. A laser rangefinder gives you a distance but no context for what you just measured. Each sensor has a blind spot that another can fill.

The more interesting design question is not whether to combine them, but how to combine them in a way that produces something genuinely more useful than the sum of its parts — rather than just a box with three separate instruments inside it that happen to share a power supply.

Our design approach centered on a few principles:

- Measurement coherence over raw capability: Rather than maximizing the specification of each individual sensor, we prioritized making the outputs spatially and temporally consistent. A thermal image and a visible image acquired at different moments, or with uncalibrated fields of view, are hard to reason about together. Getting the synchronization and coordinate mapping right was a higher priority than chasing sensor specs.

- Derived parameters, not raw data: The system was designed to produce interpretable outputs — horizontal distance, height difference, elevation angle — rather than exposing raw sensor data for a downstream system to process. This pushed more computation onto the edge platform and made the integration challenge more interesting.

- Industrial deployment realism: We designed for conditions that field-deployed systems actually encounter: variable ambient light, temperature extremes, vibration, and operators who need information quickly rather than instrument readings they have to interpret themselves.

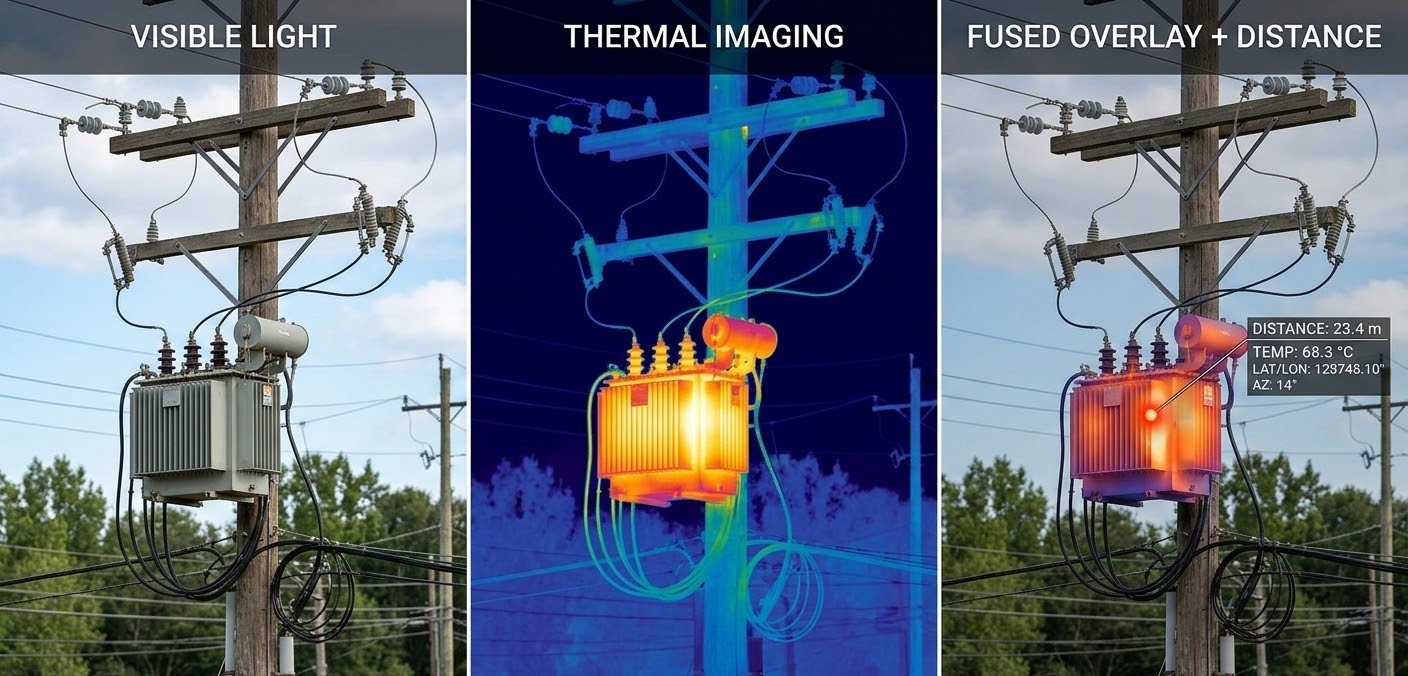

System Architecture

The platform consists of four integrated subsystems:

- Embedded Edge Computing Platform: system orchestration, sensor fusion, real-time computation

- Visible Light Camera Module: high-resolution optical observation with motorized zoom

- Thermal Imaging Module: passive infrared sensing for all-conditions operation

- Laser Rangefinder Module: slant distance plus angle sensing, enabling calculation of horizontal distance and target height

The embedded computer is the integrating layer. All three sensor modules feed into it, and it is responsible for synchronization, fusion, measurement derivation, and display output. Getting this layer right — the firmware, the interface timing, the data pipeline — turned out to be where most of the interesting engineering work lived.

Component Selection and Rationale

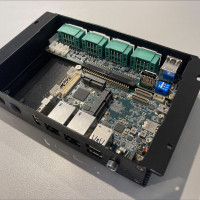

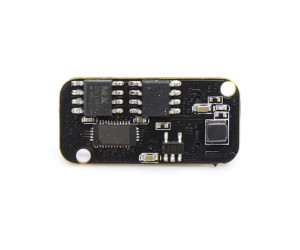

Edge Computing: NXP i.MX8M Plus

We selected the NXP i.MX8M Plus as the computing platform. The choice was deliberate: the i.MX8M Plus combines a quad-core Arm Cortex-A53 application processor, a Cortex-M7 real-time core, and an on-chip NPU rated at 2.3 TOPS — all in a power envelope that suits a compact, field-deployable enclosure.

The Cortex-M7 real-time core was particularly relevant for this project. Sensor synchronization — ensuring that the laser measurement, thermal frame, and visible frame are acquired with consistent timing — benefits from a deterministic real-time execution environment that a general-purpose Linux process scheduler cannot reliably provide. Having both cores on the same SoC, sharing memory, simplified the architecture considerably compared to a split processor design.
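One simple way to picture the timing problem is nearest-timestamp matching. The Python sketch below is an illustration only and is not taken from the project firmware. It assumes every sample carries a capture timestamp from one shared clock, and the names `Sample`, `nearest`, `fuse` and `max_skew_s` are hypothetical. It pairs each laser reading with the closest visible and thermal frames and rejects the group if either frame is too far away in time.

```python
from bisect import bisect_left
from dataclasses import dataclass
from typing import Any, List, Optional, Tuple


@dataclass
class Sample:
    t: float      # capture timestamp in seconds, from a shared clock (assumption)
    payload: Any  # frame buffer, distance reading, etc.


def nearest(samples: List[Sample], t: float) -> Optional[Sample]:
    """Return the sample closest in time to t. `samples` must be sorted by t."""
    if not samples:
        return None
    times = [s.t for s in samples]
    i = bisect_left(times, t)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = samples[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda s: abs(s.t - t))


def fuse(
    laser: Sample,
    visible: List[Sample],
    thermal: List[Sample],
    max_skew_s: float = 0.02,
) -> Optional[Tuple[Sample, Sample, Sample]]:
    """Group a laser reading with the nearest frames, or return None if the
    time skew to either frame exceeds max_skew_s."""
    v = nearest(visible, laser.t)
    th = nearest(thermal, laser.t)
    if v is None or th is None:
        return None
    if abs(v.t - laser.t) > max_skew_s or abs(th.t - laser.t) > max_skew_s:
        return None
    return laser, v, th


if __name__ == "__main__":
    # Hypothetical timestamps: visible at roughly 30 fps, thermal at 25 fps.
    visible = [Sample(t, "visible") for t in (0.0, 0.033, 0.066, 0.100, 0.133)]
    thermal = [Sample(t, "thermal") for t in (0.0, 0.04, 0.08, 0.12)]
    print(fuse(Sample(0.101, 86.8), visible, thermal))
```

The skew threshold is a design choice: a tighter value gives more coherent groups but drops more measurements when the sensor rates do not line up.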

The NPU was not heavily exercised in this initial integration — the current implementation focuses on measurement derivation rather than inference — but its presence represents meaningful headroom. Object detection on the visible or thermal stream, automated target identification, or anomaly flagging are natural extensions that the hardware can support without a platform change. This was an intentional forward-looking choice in the component selection.

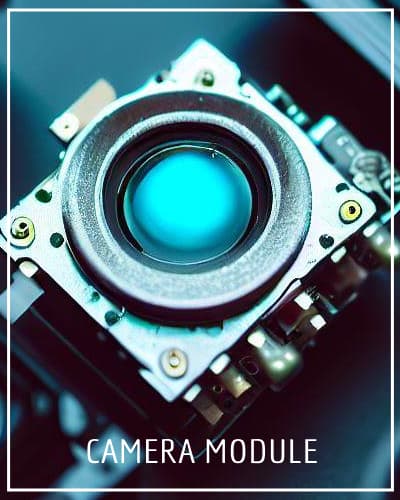

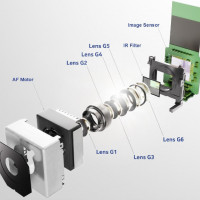

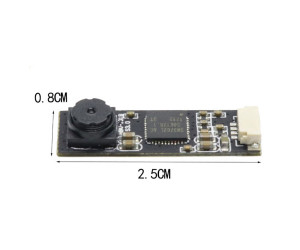

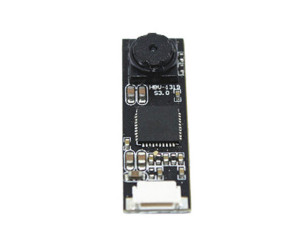

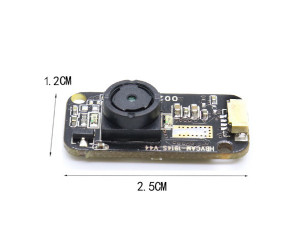

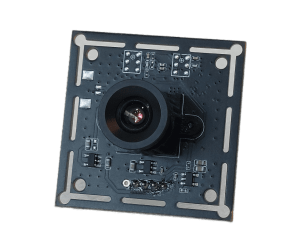

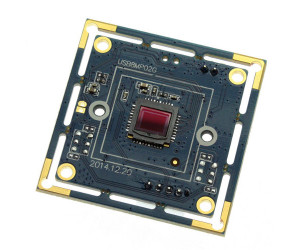

Visible Camera: ZCM8M30M33QX10

The visible camera module gave us 8MP resolution with motorized 10× optical zoom. The optical zoom capability was important for the use cases we had in mind: at long working distances, you need telephoto reach to visually confirm what the laser is pointing at. Digital zoom degrades the image precisely when you need it most — at range — so optical zoom was a hard requirement, not a nice-to-have.

In practice, integrating a motorized zoom camera into the system created an interesting alignment problem: the field of view changes with zoom level, but the laser rangefinder has a fixed boresight. Keeping the operator's visual frame of reference coherent with the measurement axis across zoom levels required careful attention to the display overlay logic.
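The overlay problem can be sketched with a simple pinhole model: a fixed angular offset between the laser boresight and the camera axis maps to a different pixel position at each zoom level. The Python below is an illustration under stated assumptions (square pixels, no lens distortion, an optical centre that does not move with zoom). The function names, the 3840×2160 frame size, the field-of-view values and the boresight offset are all placeholders, not figures from the camera datasheet or from the project.

```python
import math
from typing import Tuple


def focal_length_px(image_width_px: int, hfov_deg: float) -> float:
    """Effective focal length in pixels for a given horizontal field of view."""
    return (image_width_px / 2) / math.tan(math.radians(hfov_deg) / 2)


def reticle_px(
    image_width_px: int,
    image_height_px: int,
    hfov_deg: float,
    offset_az_deg: float,
    offset_el_deg: float,
) -> Tuple[float, float]:
    """Pixel position of the laser aim point for a fixed angular offset
    (azimuth right-positive, elevation up-positive) from the camera axis."""
    f_px = focal_length_px(image_width_px, hfov_deg)
    x = image_width_px / 2 + f_px * math.tan(math.radians(offset_az_deg))
    # Image rows grow downward, so a positive elevation moves the reticle up.
    y = image_height_px / 2 - f_px * math.tan(math.radians(offset_el_deg))
    return x, y


if __name__ == "__main__":
    # Hypothetical wide and telephoto fields of view, same boresight offset.
    for hfov in (60.0, 6.0):
        print(hfov, reticle_px(3840, 2160, hfov, offset_az_deg=0.1, offset_el_deg=-0.05))
```

Because the pixel displacement scales with the effective focal length, a boresight offset that is negligible at the wide end can become clearly visible at full zoom, which is why the overlay has to be recomputed whenever the zoom position changes.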

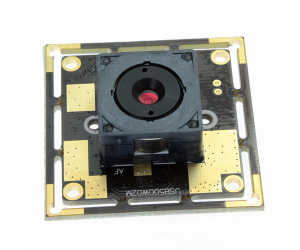

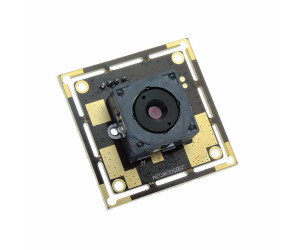

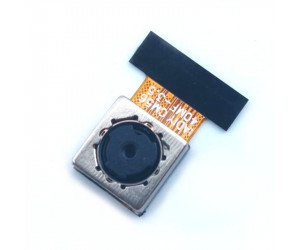

Thermal Camera: TCM300K50U1-FL35

The thermal module is a 640×512 uncooled infrared sensor with a 35mm lens — a relatively narrow field of view, chosen to match the long-range observation geometry of the visible camera rather than provide wide-area thermal scanning.

Working with the thermal channel taught us something we expected intellectually but had to experience practically: thermal image interpretation is genuinely different from visible image interpretation, and the two channels do not always agree on what is interesting in a scene. A hot bearing on a piece of machinery is obvious in thermal and invisible in visible. A structural crack is visible in a high-resolution optical image and essentially absent in thermal. Learning to present both channels in a way that helps an operator reason about the scene — rather than creating information overload — is a real UX challenge, not just a sensor integration problem.

The 35mm focal length also means the thermal field of view is narrower than a typical wide-angle thermal camera, which has implications for initial target acquisition. Operators need to use the visible channel (which has the zoom capability) to locate a target before the thermal channel provides useful detail. Sequencing the operator workflow around this reality was a design consideration we had not fully anticipated at the outset.
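To put a rough number on "narrow", the field of view follows from the sensor size and the focal length. The short Python sketch below uses the 640×512 resolution and 35 mm focal length given above. The 12 µm pixel pitch is an assumption (the post does not state it) and should be replaced with the datasheet value.

```python
import math


def fov_deg(pixels: int, pixel_pitch_um: float, focal_length_mm: float) -> float:
    """Full field of view in degrees along one sensor axis (thin-lens approximation)."""
    sensor_mm = pixels * pixel_pitch_um / 1000.0
    return math.degrees(2 * math.atan(sensor_mm / (2 * focal_length_mm)))


def footprint_m(pixels: int, pixel_pitch_um: float, focal_length_mm: float, range_m: float) -> float:
    """Width of the scene covered along one axis at a given range."""
    sensor_mm = pixels * pixel_pitch_um / 1000.0
    return range_m * sensor_mm / focal_length_mm


if __name__ == "__main__":
    PITCH_UM = 12.0  # assumed pixel pitch, not from the post
    print("HFOV (deg):", fov_deg(640, PITCH_UM, 35.0))
    print("VFOV (deg):", fov_deg(512, PITCH_UM, 35.0))
    print("Scene width at 1 km (m):", footprint_m(640, PITCH_UM, 35.0, 1000.0))
```

Under that assumed pitch the horizontal field of view comes out at roughly 12 to 13 degrees, which illustrates why a target is easier to find with the zoom camera first.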

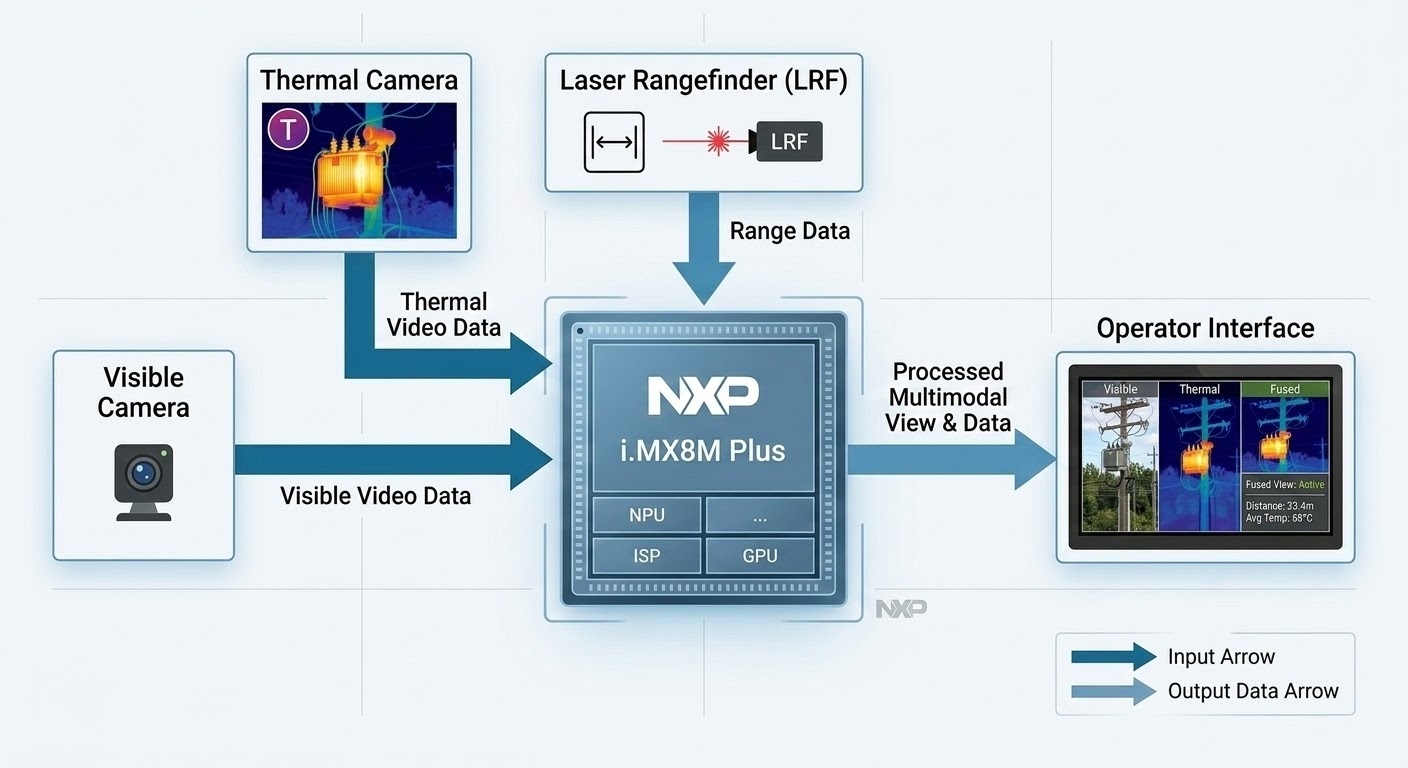

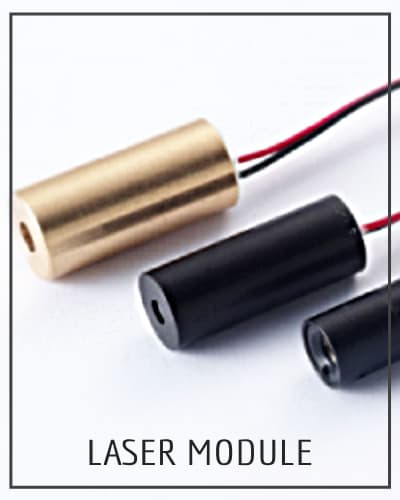

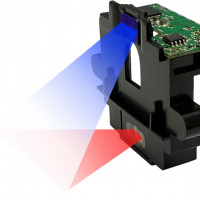

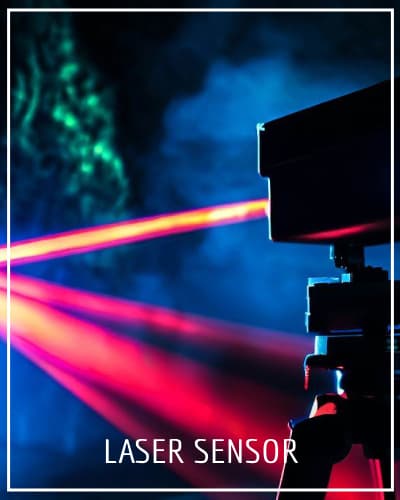

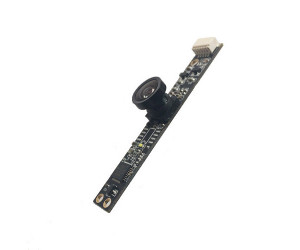

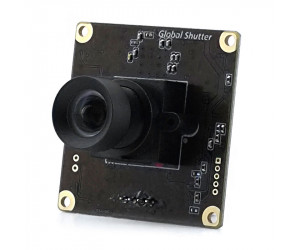

Laser Rangefinder: LRFX00M3LS

The LRFX00M3LS provides slant distance and angle sensing. Integrating the rangefinder raised a calibration consideration worth noting: the laser boresight, the visible camera optical axis, and the thermal camera optical axis are physically separated. The resulting parallax shrinks with range, since the angular offset is roughly the axis separation divided by the target distance, but it is significant at short range, and any residual angular misalignment between the axes does not shrink with range at all. The system therefore still requires careful calibration across its operating envelope to keep the overlay alignment accurate for the specific mounting geometry.
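The effect of axis separation on the overlay can be estimated with a few lines of Python. This is a sketch assuming parallel optical axes and a pinhole camera. The 5 cm baseline and the 36,000-pixel effective focal length are hypothetical values chosen for illustration, not the actual mounting geometry.

```python
import math


def parallax_angle_deg(baseline_m: float, range_m: float) -> float:
    """Angle between two parallel axes as seen from a target at range_m."""
    return math.degrees(math.atan2(baseline_m, range_m))


def parallax_px(baseline_m: float, range_m: float, focal_length_px: float) -> float:
    """Pixel shift of the laser spot relative to the camera axis.
    Pinhole model: shift = f_px * tan(angle) = f_px * baseline / range."""
    return focal_length_px * baseline_m / range_m


if __name__ == "__main__":
    BASELINE_M = 0.05     # hypothetical laser-to-camera axis separation
    F_PX = 36_000.0       # hypothetical effective focal length at full zoom
    for r in (10.0, 100.0, 1000.0):
        print(r, parallax_angle_deg(BASELINE_M, r), parallax_px(BASELINE_M, r, F_PX))
```

The shift falls off as 1/range, so a correction that is several pixels at close range fades to a small fraction of that at long range. A fixed angular misalignment between the axes, by contrast, produces the same pixel offset at every distance and has to be calibrated out separately.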

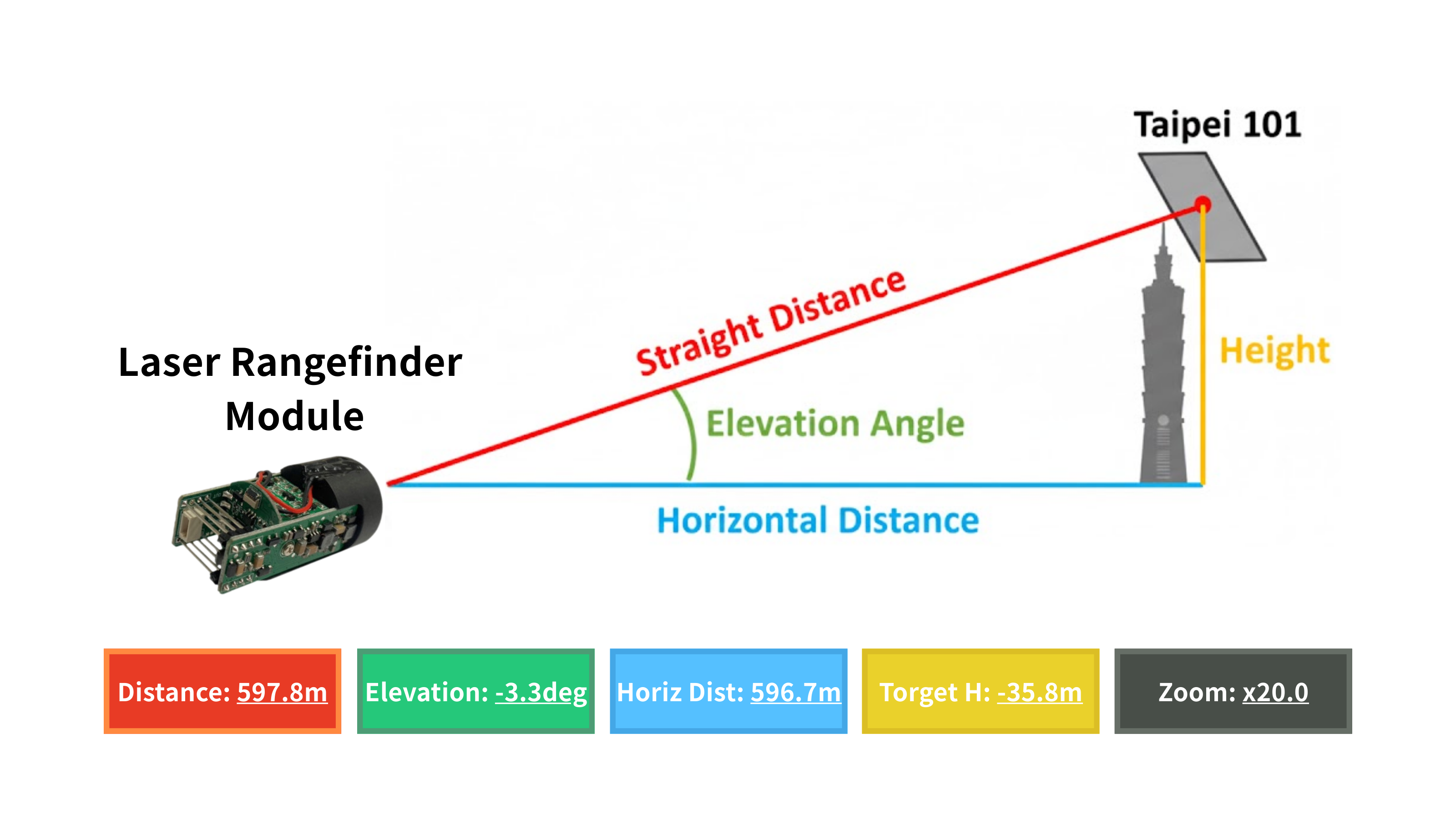

Measurement Framework

The core measurement pipeline takes the slant distance D and elevation angle θ to derive spatial parameters:

- Horizontal Distance: H = D × cos(θ)

- Target Height Difference: Δh = D × sin(θ)

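The formulas are simple; the engineering effort goes into getting clean, reliable inputs. Elevation angle accuracy is sensitive to platform levelness. If the platform is tilted along the line of sight, the measured elevation angle carries a bias δθ, which introduces a systematic error in the derived height of roughly H × δθ (with δθ in radians). A 0.5° tilt at a horizontal distance of 1 km, for example, shifts the derived height by about 8.7 m.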
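The two formulas and the effect of a levelling error can be checked with a short Python sketch. The function names and the example inputs (500 m slant distance, 10° elevation, 0.5° tilt) are illustrative assumptions, not measurements from the project.

```python
import math
from typing import Tuple


def derive(slant_m: float, elevation_deg: float) -> Tuple[float, float]:
    """Return (horizontal distance H, height difference Δh) from slant
    distance D and elevation angle θ: H = D cos θ, Δh = D sin θ."""
    theta = math.radians(elevation_deg)
    return slant_m * math.cos(theta), slant_m * math.sin(theta)


def height_error_from_tilt(slant_m: float, elevation_deg: float, tilt_bias_deg: float) -> float:
    """Systematic height error when the reported elevation angle is off by
    tilt_bias_deg, for example because the platform is not level."""
    _, h_true = derive(slant_m, elevation_deg)
    _, h_biased = derive(slant_m, elevation_deg + tilt_bias_deg)
    return h_biased - h_true


if __name__ == "__main__":
    D, THETA, TILT = 500.0, 10.0, 0.5  # hypothetical inputs
    horizontal, height = derive(D, THETA)
    error = height_error_from_tilt(D, THETA, TILT)
    # First-order estimate for comparison: H * tilt (in radians).
    estimate = horizontal * math.radians(TILT)
    print(horizontal, height, error, estimate)
```

For small tilt angles the exact error and the first-order estimate agree closely, which makes H × δθ a convenient rule of thumb when budgeting levelling accuracy.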

What We Learned

A few observations that might be useful to teams approaching similar integrations:

- Synchronization is harder than it looks. Three sensors with different output rates, different interface protocols, and different latencies need a coherent timestamping scheme if the fused output is to be meaningful. We underestimated this initially and ended up spending considerable time on the firmware architecture to get it right.

- The operator experience is a real engineering problem. Multi-sensor fusion creates the possibility of information overload. Presenting thermal, visible, and measurement data simultaneously in a way that is interpretable under field conditions — not just technically correct — required iteration. The first version of our display was technically complete and practically cluttered.

- Edge AI headroom matters even when you are not using it yet. Choosing a platform with NPU capability meant we could prototype simple inference tasks (target detection, thermal anomaly flagging) without hardware changes. Even if those features are not in the initial release, the platform choice enables a credible roadmap.

- Thermal and visible are complementary, not redundant. We knew this going in, but the experience of working with both channels simultaneously — and seeing where each one is informative and where it is not — made it concrete. Design decisions that treat the thermal channel as a fallback for when the visible channel fails miss most of the value. The interesting cases are where both channels are active and telling different parts of the same story.

Potential Applications

The use cases that motivated this project — and where similar architectures would provide clear value — include:

- Infrastructure Inspection: Height and distance measurements of elevated structures without physical access; thermal anomaly detection on bridges, towers, and building envelopes

- Industrial Facility Monitoring: Equipment thermal profiling combined with precise spatial location of detected anomalies

- Perimeter and Area Surveillance: Long-range observation with confirmed distance measurement and all-conditions thermal detection

- Environmental and Terrain Survey: Non-contact geometric measurement of terrain features, cliff faces, or remote structures

In each case, the value proposition is the same: replacing a workflow that requires multiple separate instruments, manual data correlation, and post-processing with one that delivers coherent, actionable output in real time.

Closing Thoughts

This project was a useful exercise in multi-disciplinary integration — bringing together optoelectronics, embedded systems, real-time firmware, and applied measurement into something that works as a system rather than as a collection of capable components.

The hardware foundation — thermal imaging, laser ranging, high-resolution visible optics, and edge AI compute — reflects IADIY's core capabilities, and this integration is representative of the kind of sensor fusion work we support for clients tackling similar challenges. If your project involves combining optical sensing modalities with embedded computation, we are interested in the conversation.

Interested in thermal imaging modules, laser rangefinder integration, or AI embedded systems for your application? Get in touch with our engineering team.
